How to Easily Measure Customer Satisfaction

This story originally appeared on Help Scout

There are many ways to get a quantitative look at how your support team is doing, but far fewer to assess the care and thought being put into your replies.

Measuring customer satisfaction thus becomes an easy metric to spin your wheels on. But you shouldn’t throw the baby out with the bathwater; for many teams, a simple approach will do.

Here are three questions to answer:

- Overall, are customers’ expectations being met when they talk to support?

- How likely are customers to recommend us to their friends and colleagues?

- How much effort do customers expend when they solve their problems with us?

1. Are customers’ expectations being met?

Having a good read on the overall quality of your support means you need to collect feedback in a large volume across an extended period of time. Making it easy to collect is the only way to go; the easier you make it to give feedback, the more feedback you’ll get.

This is why we built Happiness Ratings right into our help desk—once enabled, customers can rate their service at the bottom of every reply you send. Now you’re able to collect reactions over multiple conversations to get a rough gauge on the delight you’re delivering over the week, month, or quarter.

We purposefully calculate these ratings like the Net Promoter Score. We take the percentage of “Great” ratings and subtract the percentage of “Not Good” ratings to get the Happiness Score.
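To make the arithmetic concrete, here is a minimal Python sketch of that calculation. The function name and the sample data are ours for illustration, and the middle "Okay" label is an assumption; only "Great" and "Not Good" feed into the score.

```python
from collections import Counter


def happiness_score(ratings):
    """Return the percentage of "Great" ratings minus the percentage of
    "Not Good" ratings, a value between -100 and 100."""
    if not ratings:
        raise ValueError("need at least one rating")
    counts = Counter(ratings)
    total = len(ratings)
    pct_great = 100 * counts["Great"] / total
    pct_not_good = 100 * counts["Not Good"] / total
    # Any other rating (assumed here to be "Okay") counts toward the
    # total but neither adds to nor subtracts from the score.
    return pct_great - pct_not_good


# Made-up sample: 70 "Great", 20 "Okay", 10 "Not Good".
sample = ["Great"] * 70 + ["Okay"] * 20 + ["Not Good"] * 10
print(happiness_score(sample))  # 60.0
```

Because neutral ratings still count toward the total, a pile of middling responses pulls the score toward zero even when nobody is unhappy.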

Once you have enough conversations, you can use Reports to get a bird’s-eye view of how customers feel about your replies.

2. How likely are customers to recommend us?

The Net Promoter Score, a loyalty measurement approach first put forward by Fred Reichheld of Bain & Company, is still a popular and fairly useful way to gain a snapshot of how your company is perceived through customers’ eyes.

As a quick refresher, NPS is based around one question: “Would you recommend XYZ Company to a friend?” Responses are most often collected through a survey that asks participants to rate their likelihood of recommending you on a scale of 0 to 10.

- Those who indicate a 9 or 10 are “promoters”.

- Those who indicate a 7 or 8 are “passive”.

- The rest are “detractors”.

The model is simple: we all want more promoters than detractors. But bear in mind that this score is ephemeral; it’s about understanding current sentiments from a 50,000-foot view.
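The buckets above translate into a score by subtracting the percentage of detractors from the percentage of promoters. Here is a short Python sketch of that; the function names and survey responses are hypothetical, not taken from any particular survey tool.

```python
def classify(score):
    """Map a single survey answer to its NPS bucket."""
    if not 0 <= score <= 10:
        raise ValueError(f"score out of range: {score}")
    if score >= 9:
        return "promoter"
    if score >= 7:
        return "passive"
    return "detractor"


def net_promoter_score(scores):
    """Return % promoters minus % detractors, between -100 and 100."""
    if not scores:
        raise ValueError("need at least one response")
    labels = [classify(s) for s in scores]
    promoters = labels.count("promoter")
    detractors = labels.count("detractor")
    # Passives count toward the total but not toward either side.
    return 100 * (promoters - detractors) / len(scores)


# Made-up responses: 5 promoters, 2 passives, 3 detractors.
responses = [10, 9, 9, 8, 7, 6, 3, 10, 5, 9]
print(net_promoter_score(responses))  # 20.0
```

A result below zero means detractors outnumber promoters.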

If your score is negative, then this is a red flag that customers are dissatisfied. If your score is positive, great—keep up the good work. Either way, follow up on some of your ratings; if you don’t understand why your customers rated you the way they did, you’ll be left with no idea of how to improve. Start with asking:

- Why the customer gave you the rating they did.

- What your company could do to get to a 9 or 10. Opinionated customers will have plenty to share.

3. How much “effort” are customers expending?

Here’s where things get interesting. Matthew Dixon, Karen Freeman, and Nicholas Toman—all from Corporate Executive Board—shook up the customer service world a few years back in a Harvard Business Review article titled Stop Trying to Delight Your Customers.

Armed with a convincing set of data, they sought to prove that extra effort spent on delight was overrated, and that true loyalty comes from reducing customer effort.

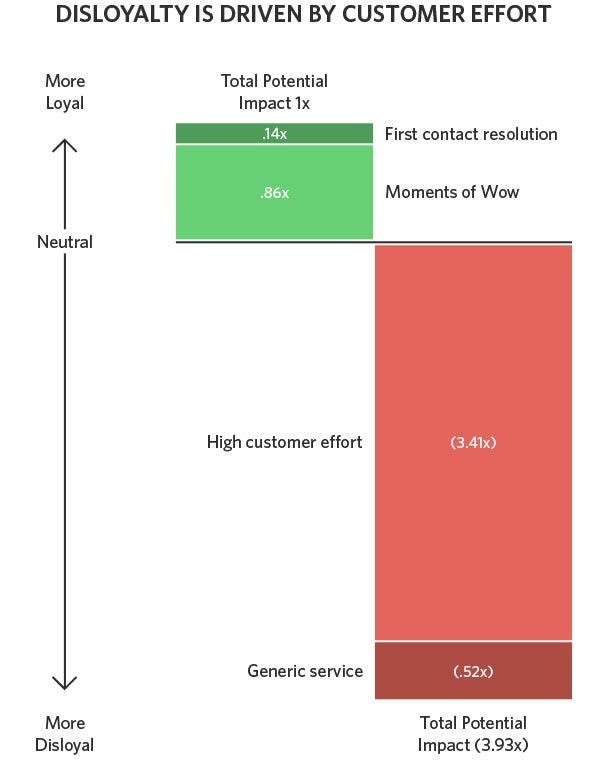

The chart below—adapted from data featured in The Effortless Experience, a book by the same authors—shows the disparity between effort vs. delight:

“Moments of wow” do add a bit of delight, but extra (perceived) effort severely sabotages how loyal customers are to a company. If dealing with you feels like a pain, satisfaction and loyalty take a nosedive, and no amount of delight can save you.

The CEB now recommends gauging your customer effort score by asking customers how easy they felt it was to get the answer they wanted. A seven-option survey is too heavy for day-to-day emails, so a lightweight approach would be to edit your signature to include a single link to “Rate My Reply.” Help Scout users often do this to ask about satisfaction.

But if you wanted to measure effort, it’d be better to ask: “How easy was it to get the help you needed today?” Very Easy, Okay, and Not Easy should work.


When a customer responds with “Not Easy,” you now have an opportunity to follow up and ask why: “Because I followed your documentation step-by-step and still had to contact you! It was incredibly confusing!” That’s feedback you can dig into and act on.
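If you collect these answers yourself, a small script can show how they split and which conversations deserve a follow-up. The sketch below is one way to do it in Python; the conversation IDs, the data shape, and the function name are all assumptions for illustration, not part of any help desk's API.

```python
from collections import Counter

# The three answer options suggested above.
OPTIONS = ("Very Easy", "Okay", "Not Easy")


def summarize_effort(responses):
    """Summarize (conversation_id, answer) pairs.

    Returns the percentage share of each answer and the IDs of the
    conversations rated "Not Easy", which are the ones to follow up on.
    """
    if not responses:
        raise ValueError("need at least one response")
    counts = Counter(answer for _, answer in responses)
    total = len(responses)
    shares = {option: 100 * counts[option] / total for option in OPTIONS}
    follow_up = [cid for cid, answer in responses if answer == "Not Easy"]
    return shares, follow_up


# Made-up survey results keyed by hypothetical conversation IDs.
results = [
    ("c1", "Very Easy"),
    ("c2", "Not Easy"),
    ("c3", "Okay"),
    ("c4", "Very Easy"),
]
shares, follow_up = summarize_effort(results)
print(shares)     # {'Very Easy': 50.0, 'Okay': 25.0, 'Not Easy': 25.0}
print(follow_up)  # ['c2']
```

The follow-up list matters more than the percentages: each "Not Easy" is a prompt to ask why.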

Having a clear answer for these questions and doing what you can to reduce customer effort across every experience with your support team is a key part of creating true customer satisfaction.
